AI character voices are exactly what they sound like: synthetically generated audio that gives personality and emotion to digital characters in videos, games, and apps. This technology is a scalable and consistent alternative to traditional voice acting, letting creators churn out content faster and adapt it for global audiences with impressive efficiency.

The New Voice of Modern Content Creation

AI character voices aren't some far-off concept anymore—they're a practical tool that's actively reshaping video production right now. For publishers and brands, this is about more than just basic text-to-speech. It’s a way to scale up content creation without the logistical nightmare of scheduling studio time or managing human voice actors.

This shift brings a level of consistency that was previously unheard of. Think about a character in a long-running video series. With AI, their voice can stay absolutely identical across dozens of episodes produced over months, which is a real challenge with human actors who might get sick or have scheduling conflicts.

From Robotic Speech to Emotional Storytelling

Getting to today’s incredibly realistic AI voices has been a long road. The journey started way back in 1939 with Bell Labs' VODER, the first electronic speech synthesizer. Much later, in 1978, the Speak & Spell brought synthesized speech into our homes. A massive leap forward came in 1984 with DECtalk, which famously gave a voice to Stephen Hawking and cemented the technology's place in our culture.

Today’s tools are built on neural networks that can actually interpret and deliver emotional nuance. It's this evolution that makes AI character voices a strategic asset. The benefits for any video workflow are crystal clear:

- Scalability: You can generate voiceovers for hundreds of videos at once, without booking a single recording session.

- Cost Efficiency: Say goodbye to hefty expenses from studio rentals, audio engineers, and talent fees.

- Rapid Localization: Translate and generate voiceovers in multiple languages in a fraction of the time it takes for traditional dubbing.

- Brand Consistency: Lock in a uniform vocal identity for your branded characters or narrators across every single platform.

The real game-changer isn't just swapping out a voice. It's about building a consistent, scalable audio identity for a brand or character that can be deployed instantly, in any piece of content, anywhere in the world.

This capability completely changes how content teams can approach production. If you want to dive deeper into the visual side of things, it’s worth exploring AI avatar videos, as they often go hand-in-hand with AI voices. By combining these technologies, creators are building immersive digital experiences that were once far too expensive or complicated to produce at scale.

How to Cast Your Perfect AI Voice Actor

Finding the right AI voice is so much more than just picking one that sounds pleasant. It’s a genuine casting process. You need a voice that nails your character's personality, fits your brand’s identity, and helps tell the story you're trying to tell. Get this wrong, and you can break that connection with your audience in an instant.

The first move is always to create a "voice persona" brief. This document becomes your North Star, guiding every decision you make from here on out. It should detail all the core attributes you're looking for, going way beyond simple descriptions like "male" or "female" and digging into the real nuances of the performance.

Defining Your Voice Persona

Think of this like a character sheet for an actor. Your brief needs to answer specific questions to build a crystal-clear picture of the ideal voice.

A great starting point is to nail down the fundamentals:

- Age and Gender: Is the character young and full of energy, or are they more mature and wise?

- Pacing and Cadence: Do they speak in a rush, or are they slow and deliberate? A tech tutorial probably needs a clear, moderately paced voice, while a thriller podcast might demand something faster and more dynamic.

- Accent and Dialect: A specific accent can instantly establish a character's background and add a crucial layer of authenticity.

- Clarity and Articulation: Is the voice crisp and easy to follow, or does it have a softer, more conversational feel? A voice for a children's story, for example, has to be exceptionally clear.

With these basics sorted, you can dive deeper into the emotional range. Does your AI character need to sound excited, empathetic, or authoritative? Modern AI character voices give you a surprising amount of emotional control, but you have to know what you need upfront.

A voice persona brief isn't just a technical checklist; it’s a creative tool. It forces you to think deeply about how your character should feel to the audience, which is the key to creating a believable and engaging performance.

This table is a handy reference for building out your own voice persona brief.

AI Voice Casting Criteria

Using these criteria will help you move from a vague idea to a concrete, actionable plan for your ideal voice.

Choosing Your Voice Sourcing Method

Once your brief is locked in, you have three main ways to find your perfect AI voice. Each has its own pros and cons depending on your project's scope, budget, and how unique you need the voice to be.

- Stock Voice Libraries: Platforms like ElevenLabs or Murf.ai offer massive selections of pre-built voices. This is easily the fastest and most cost-effective route, making it perfect for projects with tight turnarounds, like social media content or internal training videos.

- Voice Cloning: This process involves creating a digital replica of a specific human actor's voice. It delivers a completely unique and proprietary sound, ensuring your brand or character has a one-of-a-kind audio identity. For publishers digging into this, our guide on the best AI voice clone tools breaks down the top options out there.

- Custom Synthetic Voices: This is the most advanced path, where a totally new voice is designed from the ground up by blending different vocal characteristics. It gives you the ultimate creative control but demands a serious investment in both time and resources.

Your choice here directly shapes your workflow. A stock voice can get you up and running in minutes. Cloning requires getting consent and working with a voice actor. A fully custom voice is a major project, but the payoff is a sonic brand that is truly and completely yours.

Generating and Refining a Lifelike Performance

Once you've picked your AI voice, the real creative work begins. Generating the audio is just the starting point; the magic happens when you start refining that raw output until it feels genuinely human.

Simply pasting a script and hitting "generate" almost always results in a flat, uninspired delivery. It's a dead giveaway that the voice is synthetic, and it's the fastest way to lose your audience.

Modern AI voice platforms give you a whole suite of intuitive controls to shape the performance. The most basic and impactful adjustments are always pitch, speed, and pauses. Lowering the pitch can add a sense of gravitas, while a slightly faster speed can inject some excitement.

And don't underestimate the power of pauses. Strategically adding even a quarter-second beat after a key phrase mimics natural human breathing and thought, instantly making the dialogue more believable.

Mastering Performance with SSML

To unlock a truly professional level of control, you need to get comfortable with SSML (Speech Synthesis Markup Language). I know, it sounds technical, but it’s basically just a set of simple tags you can drop into your script to direct the AI’s performance with incredible precision. It’s the difference between being a passenger and actually taking the wheel.

With SSML, you can fine-tune aspects that the standard controls just can't touch:

- Emphasis: Use tags to stress specific words or phrases, which can shift the entire meaning of a sentence. For instance, emphasizing "you" in "Is that what you think?" creates a completely different emotional tone than emphasizing "think."

- Pronunciation: If the AI stumbles on a brand name, some industry jargon, or a unique name, you can feed it a phonetic spelling. This ensures it’s pronounced perfectly every single time, which is non-negotiable for brand consistency.

- Emotional Inflection: While basic emotion selectors are a good start, SSML helps you add much more subtle layers. You can learn more about enhancing digital voices with text-to-speech emotion to really bring your characters to life.
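To make those tags a little more concrete, here is a minimal Python sketch that assembles a short SSML string by hand. The `<emphasis>`, `<break>`, `<prosody>`, and `<sub>` elements come from the W3C SSML specification, but support differs from platform to platform, so treat the attribute values as assumptions to check against your tool's documentation. The brand name "Xylo" and its spoken form are invented purely for illustration.

```python
import xml.etree.ElementTree as ET


def build_ssml() -> str:
    """Return a small SSML document as a string."""
    return (
        "<speak>"
        # Stress one word to change the meaning of the question.
        'Is that what <emphasis level="strong">you</emphasis> think?'
        # A quarter-second beat after the key phrase.
        '<break time="250ms"/>'
        # Slightly slower and lower for the next line.
        '<prosody rate="95%" pitch="-2st">'
        # Tell the engine how to say a hypothetical brand name.
        'Welcome to <sub alias="zy-low">Xylo</sub>.'
        "</prosody>"
        "</speak>"
    )


ssml = build_ssml()
# Parsing confirms the markup is well-formed XML before it is sent anywhere.
root = ET.fromstring(ssml)
print(root.tag)
print([child.tag for child in root])
```

Checking that the string parses as XML is a cheap safeguard: a missing closing tag is one of the most common reasons a platform rejects an SSML script.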

This granular control over AI character voices has come a long, long way. The big shift away from robotic speech really picked up steam with assistants like Siri and Alexa, which brought contextual awareness to the mainstream.

This progress has led to some mind-blowing technologies, like Microsoft's VALL-E, which can replicate a person’s voice and emotional tone from just a three-second audio clip. It really shows the leap we've made from simple commands to emotionally aware AI. If you're curious, you can discover more about the invention of AI voices on podcastle.ai.

Pro Tips for Authentic Delivery

From my experience, achieving a lifelike performance often comes down to clever workflow habits and embracing a little bit of imperfection.

Instead of generating an entire script in one go, break it down. Work in smaller, more manageable chunks—a paragraph or even a single sentence at a time. This approach gives you far more control to tweak the delivery of each little segment before you stitch them all together.
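If your scripts are long, splitting them by hand gets tedious. The sketch below shows one possible way to break a script into sentence-based chunks in Python. The function name `split_into_chunks` and the `max_chars` limit are assumptions for illustration, and the sentence rule is deliberately naive, so expect to adjust it for your own copy.

```python
import re


def split_into_chunks(script: str, max_chars: int = 200) -> list[str]:
    """Group sentences into chunks that stay under max_chars where possible."""
    # Split after ., !, or ? followed by whitespace. This naive rule will
    # mis-split abbreviations such as "Dr. Smith".
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", script) if s.strip()]
    chunks: list[str] = []
    current = ""
    for sentence in sentences:
        candidate = f"{current} {sentence}".strip()
        if current and len(candidate) > max_chars:
            # Adding this sentence would exceed the limit, so close the chunk.
            chunks.append(current)
            current = sentence
        else:
            current = candidate
    if current:
        chunks.append(current)
    return chunks


script = "Welcome back. Today we look at pacing! Ready? Let's begin."
for i, chunk in enumerate(split_into_chunks(script, max_chars=30), start=1):
    print(i, chunk)
```

Each chunk can then be generated, reviewed, and regenerated on its own without touching the rest of the voiceover.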

The secret to making AI voices sound human is to add human-like imperfections. A perfectly paced, flawless delivery can sound sterile. Introducing tiny hesitations or slight variations in speed makes the performance feel organic and spontaneous.

Think about how people actually talk. We rarely speak in perfectly formed, evenly paced sentences. We pause to think, we speed up when we're excited, and we place emphasis on words to make a point. Replicating these natural inconsistencies is what elevates an AI voice from just a tool to a true performance.

Integrating AI Audio Into Your Video Workflow

Getting your generated audio into a video project is a blend of technical know-how and creative finesse. It’s not just about dragging a file into your timeline. The real goal is to make the AI voice feel like it belongs there—a natural, intentional part of the final cut.

Your first decision point is a technical one: what audio format should you export?

For almost any serious project, a WAV file is your best bet. WAVs typically store uncompressed audio, so nothing is lost to lossy encoding and you keep all the detail your platform generated. That fidelity is crucial when you get to the mixing and mastering stage. If you're just whipping up a quick social media clip where file size is a bigger deal, a high-bitrate MP3 (320 kbps) will do the job just fine.
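To get a feel for the file-size trade-off, here is a rough back-of-the-envelope calculation in Python. The 48 kHz, 16-bit, mono defaults are assumptions, since platforms export at a range of settings, and both functions ignore headers and metadata.

```python
def pcm_wav_bytes(seconds: float, sample_rate: int = 48_000,
                  bit_depth: int = 16, channels: int = 1) -> int:
    """Approximate size of uncompressed PCM audio data (header excluded)."""
    return int(seconds * sample_rate * (bit_depth // 8) * channels)


def mp3_bytes(seconds: float, kbps: int = 320) -> int:
    """Approximate size of a constant-bitrate MP3 (1 kbps = 1000 bits/s)."""
    return int(seconds * kbps * 1000 / 8)


minute = 60
print(pcm_wav_bytes(minute) / 1_000_000)  # megabytes for one minute of mono WAV
print(mp3_bytes(minute) / 1_000_000)      # megabytes for one minute of 320 kbps MP3
```

Under these assumptions, a minute of mono WAV comes to roughly 5.8 MB against about 2.4 MB for the MP3, and a stereo WAV would double the first figure.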

Syncing Audio and Visuals Perfectly

With the audio file imported into your editing software, the next hurdle is synchronization. You want the narration to feel connected to what's happening on screen, not just slapped on top. This is all about timing key phrases to land perfectly with visual cues—an animation, a character's movement, or a text overlay popping up.

A classic rookie mistake is to generate one long, monolithic audio track and then try to chop up your visuals to fit it. Flip that process on its head for a much smoother workflow:

- Pace the Visuals First: Start by getting a rough cut of your video. Figure out the rhythm and identify where your key moments are.

- Generate Audio in Chunks: Instead of one long script, create your voiceover in smaller, logical segments that match up with your scenes or even paragraphs.

- Align and Adjust: Now, drop these smaller audio clips into your timeline and nudge them into place. This approach gives you the breathing room to add pauses or trim a few seconds without having to re-generate the entire voiceover.
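The chunked workflow above can also be sanity-checked before you open your editor. This Python sketch flags neighboring clips that would collide or sit too close together on the timeline. The `Clip` structure, the clip names, and the quarter-second `min_gap` are all hypothetical values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Clip:
    name: str
    start: float     # seconds on the timeline where the scene's cue lands
    duration: float  # length of the generated audio clip in seconds


def find_overlaps(clips: list[Clip], min_gap: float = 0.25) -> list[tuple[str, str, float]]:
    """Return (earlier, later, shortfall) for neighbours closer than min_gap."""
    ordered = sorted(clips, key=lambda c: c.start)
    problems = []
    for earlier, later in zip(ordered, ordered[1:]):
        gap = later.start - (earlier.start + earlier.duration)
        if gap < min_gap:
            problems.append((earlier.name, later.name, round(min_gap - gap, 3)))
    return problems


timeline = [
    Clip("intro", start=0.0, duration=4.0),
    Clip("feature", start=4.1, duration=6.0),
    Clip("outro", start=12.0, duration=3.0),
]
for earlier, later, shortfall in find_overlaps(timeline):
    print(f"{later} starts too soon after {earlier}: move or trim by {shortfall}s")
```

Because each clip is its own file, fixing a flagged pair means nudging or regenerating one short segment instead of the whole narration.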

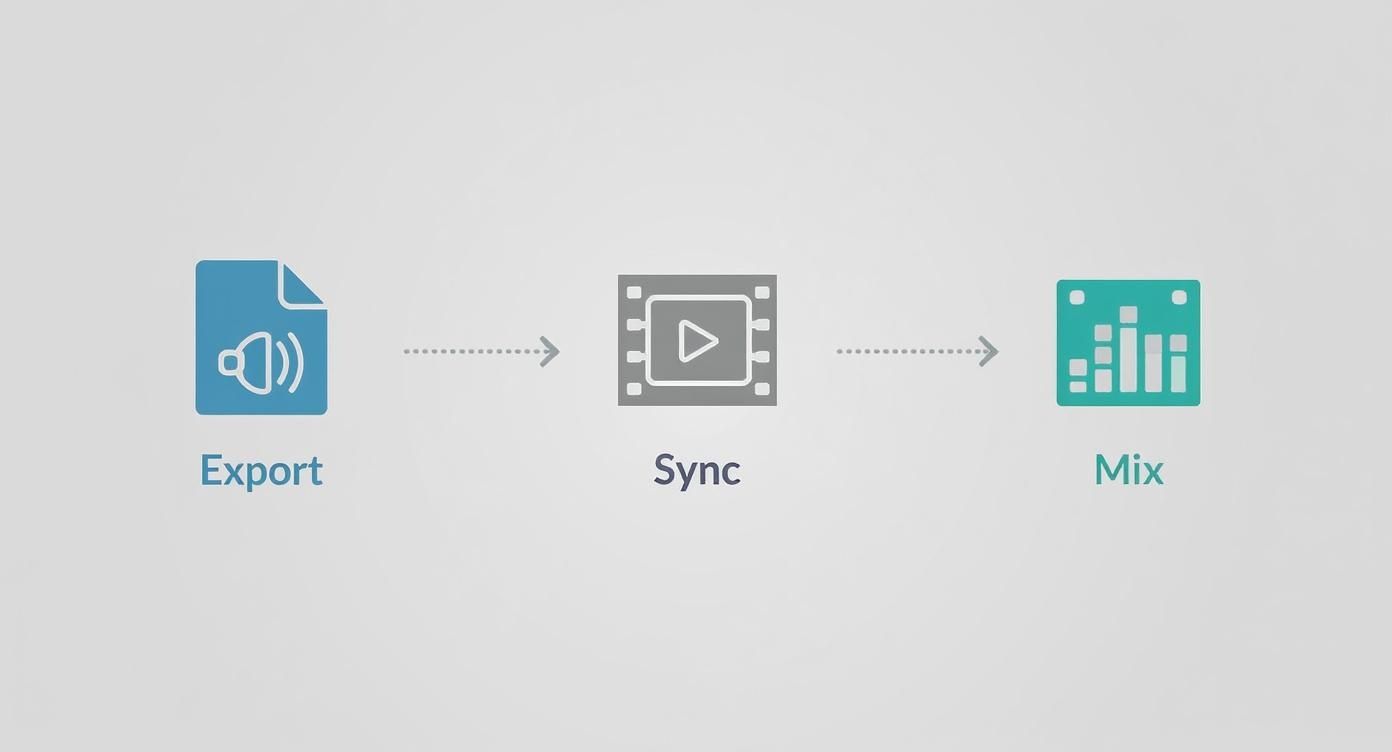

This simple diagram breaks down the core stages of the audio integration process.

As you can see, it flows from exporting high-quality audio to syncing with visuals and, finally, mixing everything into a polished soundscape. That last mixing stage is often what separates the amateur stuff from a professional result.

The best AI voice integration is one the audience never even notices. If the audio blends seamlessly with the music, sound effects, and visuals, you've nailed it.

Of course, if you have characters speaking on-screen, nailing a perfect lip-sync is a whole different ballgame. To dive deeper into that specific challenge, check out our guide on mastering AI lip sync for truly believable character performances.

Polishing the Final Sound Mix

Don't skip the final audio post-production. A raw, unprocessed AI character voice can sound sterile and disconnected when dropped into a mix with music and sound effects.

A few basic audio tools will help it sit naturally. Applying a subtle EQ (equalizer) can shave off any harsh frequencies, while a bit of light compression will even out the volume, keeping the voice consistently clear and present.
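If you're wondering what compression actually does to the signal, this deliberately simplified Python sketch shows the core idea: anything louder than a threshold gets pulled back toward it. It works on linear sample values between -1.0 and 1.0 and leaves out attack, release, and make-up gain, whereas the compressors in editing software usually define threshold and ratio in decibels. The `threshold` and `ratio` defaults are assumptions, not recommended settings.

```python
def compress(samples: list[float], threshold: float = 0.5, ratio: float = 4.0) -> list[float]:
    """Reduce the level of any sample whose magnitude exceeds threshold."""
    out = []
    for s in samples:
        level = abs(s)
        if level > threshold:
            # Only the portion above the threshold is scaled down by the ratio.
            level = threshold + (level - threshold) / ratio
        # Restore the original sign of the sample.
        out.append(level if s >= 0 else -level)
    return out


voice = [0.1, -0.3, 0.9, -1.0, 0.5]
print(compress(voice))
```

Quiet samples pass through untouched while the peaks shrink, which is why a compressed voice holds a steadier level against music and sound effects.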

For publishers producing content at scale, this is where templates pay off. Build presets in your editing software, whether that's Premiere Pro or Final Cut Pro, so the setup work only happens once. A well-designed template with pre-configured audio tracks and effects gives every single video a consistent, high-quality audio signature without a ton of manual tweaking.

Navigating the Ethics of Synthetic Voices

Using AI character voices is about more than just technology; it’s about doing it responsibly. As these tools get more powerful and easier to find, we have to think carefully about the ethical lines we’re drawing. At the end of the day, it all comes down to maintaining trust with your audience and respecting people's rights.

Transparency should be your starting point. While there isn't a single rulebook just yet, being upfront about using an AI-generated voice is always the best move, especially when your audience expects authenticity. Think about it: a synthetic narrator for a cartoon is one thing. But using an AI voice in a documentary or a news report, where people assume a human is speaking, is a completely different ballgame.

Understanding Legal and Ownership Rights

The legal side of synthetic voices is still catching up to the tech, but two things need your immediate attention: voice cloning consent and platform usage rights. These are not areas where you want to wing it.

When it comes to cloning a specific person’s voice, the rule is crystal clear and non-negotiable: you absolutely must have their explicit, informed consent. Taking someone's voice without their permission is a massive ethical overstep and can land you in serious legal hot water. This isn't a handshake deal; it needs to be a documented agreement spelling out exactly how that voice clone will be used.

A person's voice is a core part of their identity. Using a digital replica without their express permission is not just a legal risk; it's a fundamental violation of their personal rights. Always prioritize clear, written consent before starting any voice cloning project.

On top of that, every AI voice platform you use will have its own terms of service that lay out the rules on ownership and usage. Before you get too invested in a tool, you need to dig in and find out:

- Who actually owns the audio you generate? Do you get full commercial rights, or are there strings attached?

- Can you use the voice on any platform you want? Some services might restrict use to certain channels.

- What are the rules for custom voice clones? Make sure the platform’s policies don't clash with the agreement you have with your voice actor.

The Rapid Evolution of Voice Technology

The explosion in accessibility for this technology has been incredible. Just look at the commercial journey of voice tech to see how fast things have moved. Back in 1990, a consumer speech-to-text product like Dragon Dictate would set you back around $9,000. Fast forward to today, and neural network-driven voice cloning can create amazingly realistic results from just a tiny bit of data, opening the door for personalized AI characters in everything from entertainment to marketing.

This shift represents a huge market transformation, turning what was once specialized, pricey tech into a tool that's now widely available for creators everywhere. You can read more about the symphony of voice technology and its evolution on hastingsnow.com.

With this power in more hands, it’s even more critical for creators to be responsible. Pushing the creative boundaries with AI voices is exciting, but doing it the right way—ethically and legally—is what will set you up for long-term success and keep that all-important trust with your audience. Always read the fine print on your platform's terms and get that consent locked down.

Got Questions About AI Character Voices? We Have Answers.

As creators dip their toes into the world of AI voices, a few questions almost always pop up. Let's tackle them head-on, so you can sidestep common frustrations and make smarter choices for your video projects right from the start.

Can Synthetic Voices Actually Sound Emotional?

A lot of creators wonder if these voices can genuinely handle complex emotional performances. The short answer is yes, but it’s not a one-click process. You have to get your hands dirty.

Many high-end platforms offer built-in emotional styles like 'happy,' 'sad,' or 'angry' that work pretty well out of the box. But for that next level of control, you’ll need to dive into SSML tags. These little snippets of code let you tweak pitch, rate, and emphasis on specific words, mimicking the subtle shifts in human emotion.

While it might not perfectly replicate a seasoned voice actor every single time, the technology is more than capable of producing expressive and emotionally resonant AI character voices for all kinds of projects, from animated stories to marketing videos.

What's the Single Biggest Mistake to Avoid?

Treating the first audio export as the final product. That's it. That's the biggest mistake.

Pasting a script and hitting "generate" usually results in a flat, robotic delivery that screams unnatural to a listener. The real magic, the key to a professional-sounding result, happens in the refinement stage after the initial audio is created.

This isn't just a suggestion; it's a critical part of the workflow. It involves:

- A Critical Listen: Play back the audio. Where does it sound clunky? Are there any weird pauses?

- Pacing Tweaks: Manually add or shorten pauses to build a natural, conversational rhythm.

- Pronunciation Fixes: Use phonetic inputs to correct any mangled brand names, technical jargon, or unique words.

- Inflection Control: This is where you use those SSML tags to add emphasis and emotional weight to key phrases, making the performance pop.
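Pronunciation fixes are a good candidate for automation, since the same brand names tend to trip up the voice in every script. The Python sketch below wraps known problem terms in SSML `<sub>` tags from a small lookup table. The function name `apply_lexicon`, the brand name "Xylo", and the spoken forms are hypothetical, and some platforms offer their own pronunciation dictionaries instead, so check what yours supports.

```python
import re
from xml.sax.saxutils import escape, quoteattr


def apply_lexicon(text: str, lexicon: dict[str, str]) -> str:
    """Wrap each lexicon term in an SSML <sub> tag carrying its spoken form."""
    if not lexicon:
        return f"<speak>{escape(text)}</speak>"
    # Longest terms first so a multi-word name wins over a shorter one inside it.
    terms = sorted(lexicon, key=len, reverse=True)
    pattern = re.compile(r"\b(" + "|".join(re.escape(t) for t in terms) + r")\b")
    parts = []
    last = 0
    for m in pattern.finditer(text):
        # Escape the plain text so characters like & stay valid XML.
        parts.append(escape(text[last:m.start()]))
        alias = quoteattr(lexicon[m.group(1)])
        parts.append(f"<sub alias={alias}>{escape(m.group(1))}</sub>")
        last = m.end()
    parts.append(escape(text[last:]))
    return "<speak>" + "".join(parts) + "</speak>"


lexicon = {"Xylo": "zy-low", "SQL": "sequel"}
print(apply_lexicon("Xylo stores data in SQL & more.", lexicon))
```

Keeping the lookup table in one shared file means a fix made for one video carries over to every script that follows.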

Skipping this refinement stage is what separates an amateur-sounding AI voiceover from one that feels polished and truly engaging. It’s the difference between using a tool and directing a performance.

How Do I Make Sure the Voice Matches My Brand?

Consistency is everything when it comes to building a recognizable audio brand. It all starts with creating a simple 'brand voice guide' that clearly outlines your desired vocal personality. Are you going for authoritative and buttoned-up, or something more friendly and approachable?

A brand's audio identity is built on repetition and consistency. Just as you use the same logo and color palette, using the same vocal signature across all your video content creates a powerful sense of recognition and trust with your audience.

Once you have this guide, use it to pick a base AI voice that fits your brand’s persona. If you’re going the voice cloning route, choose a human actor who truly embodies the identity you're trying to build.

From that point on, be disciplined. For every project, stick to the same voice settings (like pitch and speed) and apply consistent audio post-processing, like EQ and compression. This disciplined approach ensures your audience immediately recognizes your brand's unique sound, no matter where they find your content. Over time, that consistency builds a powerful and memorable connection.
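One lightweight way to enforce that discipline is to keep your voice settings in a single file that every project loads. Here is a minimal Python sketch of the idea. The field names, the `narrator-01` voice ID, and the preset values are all hypothetical, so map them to whatever controls your platform and editing software actually expose.

```python
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class VoiceProfile:
    voice_id: str            # platform-specific identifier (hypothetical value below)
    pitch_semitones: float   # offset from the voice's default pitch
    speed: float             # 1.0 = the platform's default rate
    eq_preset: str           # name of the EQ preset in your editor
    compression_ratio: float


BRAND_VOICE = VoiceProfile(
    voice_id="narrator-01",
    pitch_semitones=-1.0,
    speed=1.05,
    eq_preset="warm-voice",
    compression_ratio=3.0,
)


def save_profile(profile: VoiceProfile) -> str:
    """Serialize the profile so every project loads identical settings."""
    return json.dumps(asdict(profile), indent=2, sort_keys=True)


def load_profile(raw: str) -> VoiceProfile:
    """Rebuild a profile from its JSON form."""
    return VoiceProfile(**json.loads(raw))


print(save_profile(BRAND_VOICE))
```

Marking the dataclass as frozen means nobody can quietly tweak the pitch for one video; changing the brand voice becomes a deliberate edit to the shared file.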
